OSMO: Open-vocabulary Self-eMOtion Tracking

Dec 8, 2025

Mohamed Abdelfattah

Bugra Tekin

Fadime Sener

Necati Cihan Camgoz

Eric Sauser

Shugao Ma

Alexandre Alahi

Edoardo Remelli

A CVPR 2026 submission introducing a new task, benchmark, and model for open-vocabulary self-emotion tracking from egocentric multimodal streams.

Abstract

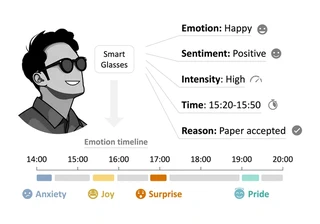

We introduce the novel task of egocentric self-emotion tracking, which aims to infer an individual’s evolving emotions from egocentric multimodal streams such as voice, visual surroundings, semantic subtext, and eye-tracking signals.

To establish this research direction, we present:

- OSMO dataset, a large-scale annotation effort on 110 hours of existing bilingual smart-glasses recordings, establishing the largest egocentric emotion dataset and the first with subject-wise emotion timelines.

- OSMO benchmark, a suite of five tasks (emotion recognition, sentiment, intensity, localization, and reasoning) that redefine emotion understanding as a continuous, context-aware process rather than discrete classification of trimmed videos.

- OSIRIS, a large multimodal model that tracks emotions over time by reasoning over the user’s personal emotion history, current expressions, and egocentric observations.

Extensive evaluations show that OSIRIS achieves state-of-the-art performance, delivering, for the first time, coherent emotion timelines from egocentric data. Dataset, model, and code will be fully open-sourced upon publication.
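
As an illustration of what a subject-wise emotion timeline could look like in code, the following is a minimal Python sketch. It is not the OSMO annotation format or the OSIRIS model: the `EmotionSegment` fields, the `emotion_at` lookup, and the use of temporal intersection-over-union as a score for the localization task are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class EmotionSegment:
    """One labeled span on a subject's timeline (hypothetical schema)."""
    start_s: float  # segment start, in seconds from the recording start
    end_s: float    # segment end, in seconds (exclusive)
    label: str      # free-form text, since the vocabulary is open


def emotion_at(timeline: List[EmotionSegment], t_s: float) -> Optional[str]:
    """Return the label of the segment covering time t_s, or None if no segment does."""
    for seg in timeline:
        if seg.start_s <= t_s < seg.end_s:
            return seg.label
    return None


def temporal_iou(a: EmotionSegment, b: EmotionSegment) -> float:
    """Temporal intersection-over-union of two segments.

    Assumed here as one plausible localization score; the benchmark's
    actual metrics are not specified in this abstract.
    """
    inter = max(0.0, min(a.end_s, b.end_s) - max(a.start_s, b.start_s))
    union = (a.end_s - a.start_s) + (b.end_s - b.start_s) - inter
    return inter / union if union > 0 else 0.0


# Example timeline for a single subject (made-up values).
timeline = [
    EmotionSegment(0.0, 10.0, "calm"),
    EmotionSegment(10.0, 25.0, "frustrated"),
]
```

Representing the timeline as labeled segments rather than per-clip class labels mirrors the abstract's framing of emotion as a continuous process: a query such as `emotion_at(timeline, 12.0)` reads the label directly off the timeline, and predicted segments can be compared against annotated ones span by span.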

Submission Metadata

- Submission date: 08 Dec 2025

- Last modified: 11 Dec 2025

- Track: Conference, Senior Area Chairs, Area Chairs, Reviewers, Authors

- License: CC BY 4.0

- Subject Area: Datasets and evaluation

- Student Paper: Yes

- Keywords: self-emotion tracking, emotion recognition, multimodal large language model, multimodal learning