OSKAR: Omnimodal Self-supervised Knowledge Abstraction and Representation

Dec 1, 2025


Mohamed Abdelfattah*

Kaouther Messaoud*

Alexandre Alahi

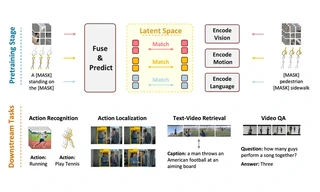

A multimodal self-supervised foundation model for learning unified representations across modalities by predicting masked multimodal features in latent space.

Overview

OSKAR (Omnimodal Self-supervised Knowledge Abstraction and Representation) is a research project focused on learning robust multimodal representations without labels. The method abstracts knowledge across modalities in a shared latent space and trains through masked multimodal feature prediction.
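As a rough illustration of what "masked multimodal feature prediction" in a latent space can look like, here is a toy NumPy sketch. It is not the OSKAR architecture or training code: the function name, the two generic modalities, the linear encoder `w_enc`, the linear predictor `w_pred`, and the mean-pooled context are all assumptions made for this example. The only point it shows is that the loss compares predicted and target features in latent space, and only at masked positions.

```python
import numpy as np

def masked_latent_prediction_loss(features, mask, w_enc, w_pred):
    """Toy masked feature prediction in a shared latent space (illustrative only).

    features: (num_tokens, feat_dim) tokens concatenated across modalities
    mask:     (num_tokens,) boolean array, True where a token is hidden
    w_enc:    (feat_dim, latent_dim) hypothetical shared encoder weights
    w_pred:   (latent_dim, latent_dim) hypothetical predictor weights
    """
    # Targets: latent features of every token (treated as fixed targets here).
    targets = features @ w_enc
    # Context: only visible tokens are encoded, then summarised by their mean.
    context = (features[~mask] @ w_enc).mean(axis=0)
    # Predict one latent vector per masked position from the context summary.
    predictions = np.tile(context @ w_pred, (int(mask.sum()), 1))
    # The loss is measured in latent space, on masked positions only.
    return float(np.mean((predictions - targets[mask]) ** 2))

rng = np.random.default_rng(0)
modality_a = rng.normal(size=(6, 8))   # hypothetical tokens from one modality
modality_b = rng.normal(size=(4, 8))   # hypothetical tokens from another modality
tokens = np.concatenate([modality_a, modality_b], axis=0)

mask = np.zeros(tokens.shape[0], dtype=bool)
mask[[1, 4, 7]] = True                 # hide tokens from both modalities

w_enc = rng.normal(size=(8, 5))
w_pred = np.eye(5)
loss = masked_latent_prediction_loss(tokens, mask, w_enc, w_pred)
print(f"masked latent prediction loss: {loss:.4f}")
```

In a real system the encoder and predictor would be trained networks and the masked positions would be predicted individually rather than from a single pooled summary; the sketch keeps everything linear so the objective itself is easy to see.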

Authors

- Mohamed Abdelfattah*
- Kaouther Messaoud*
- Alexandre Alahi

* Equal contribution

Venue

NeurIPS 2025

One-Sentence Summary

OSKAR is a self-supervised multimodal foundation model that learns in the latent space by predicting masked multimodal features.

Links

BibTeX

@inproceedings{abdelfattahoskar,
  title={OSKAR: Omnimodal Self-supervised Knowledge Abstraction and Representation},
  author={Abdelfattah, Mohamed O and Messaoud, Kaouther and Alahi, Alexandre},
  booktitle={The Thirty-ninth Annual Conference on Neural Information Processing Systems}
}

Project Status: ✅ Published at NeurIPS 2025

Project Page: multimodal-oskar.github.io

Paper: NeurIPS Virtual Poster