OSMO: Open-vocabulary Self-eMOtion Tracking

Mohamed Abdelfattah

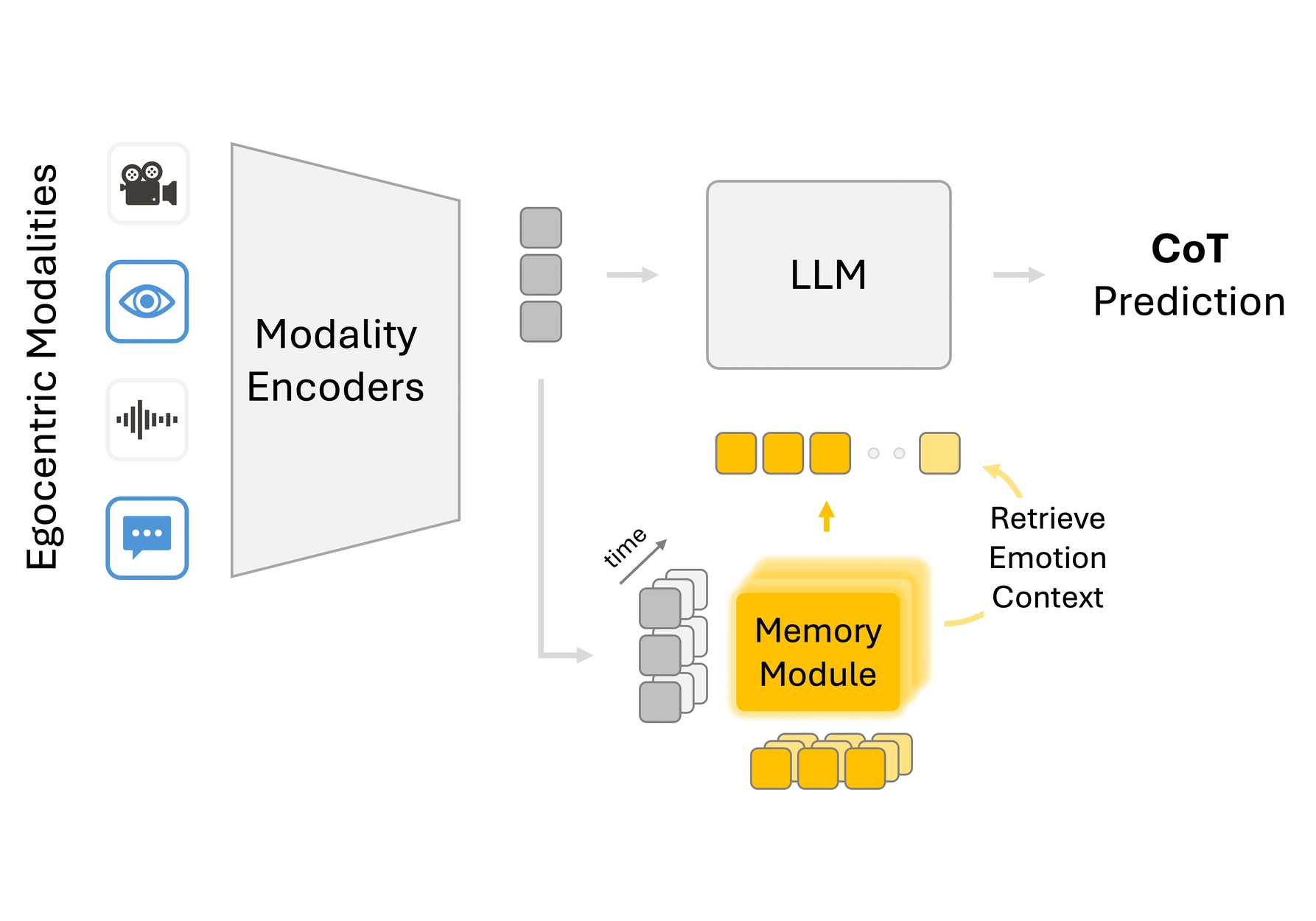

OSIRIS is an egocentric multimodal LLM for continuous, open-vocabulary human-state tracking from smart glasses.

Call me Os

Mohamed Abdelfattah

Mohamed Abdelfattah*

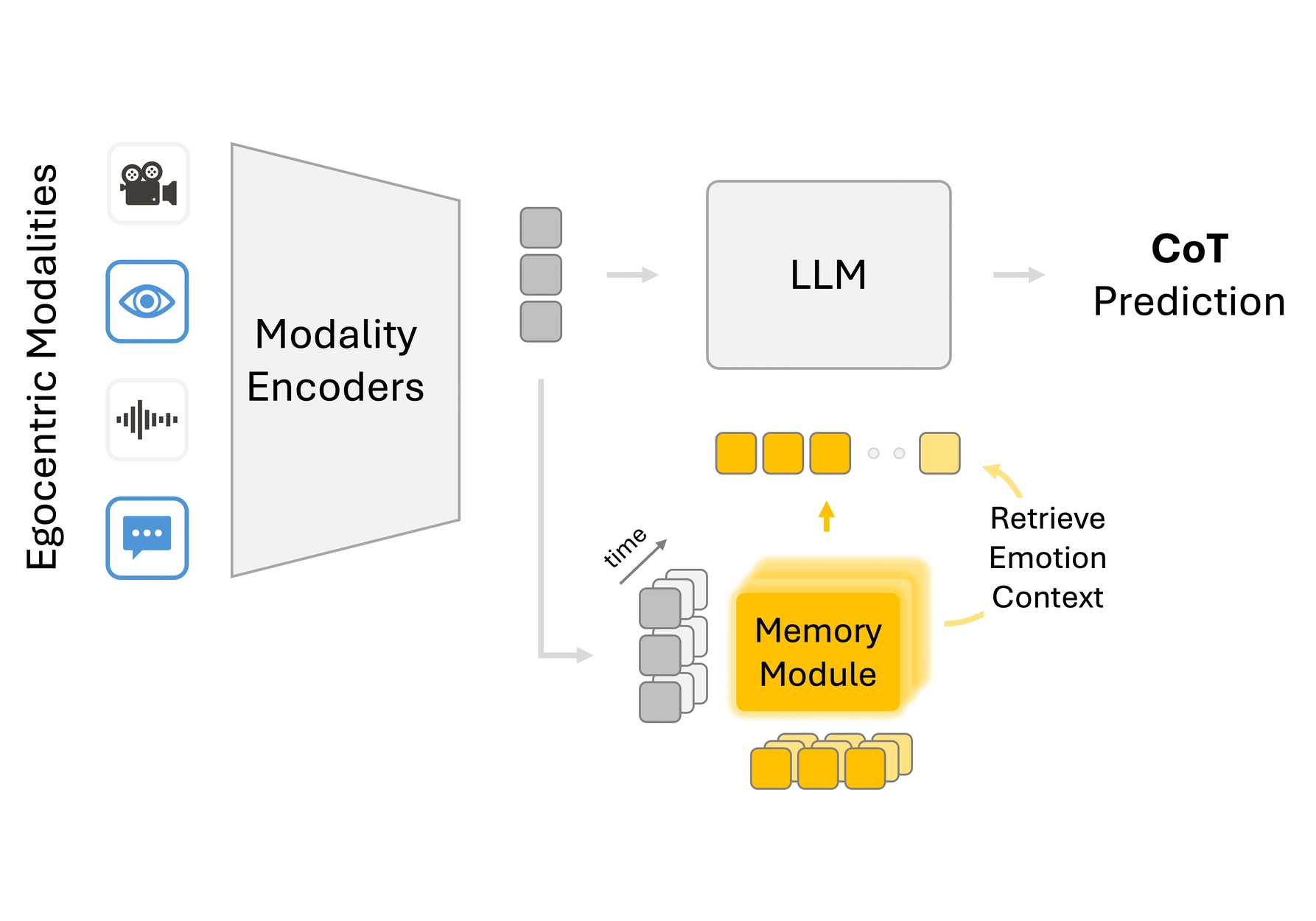

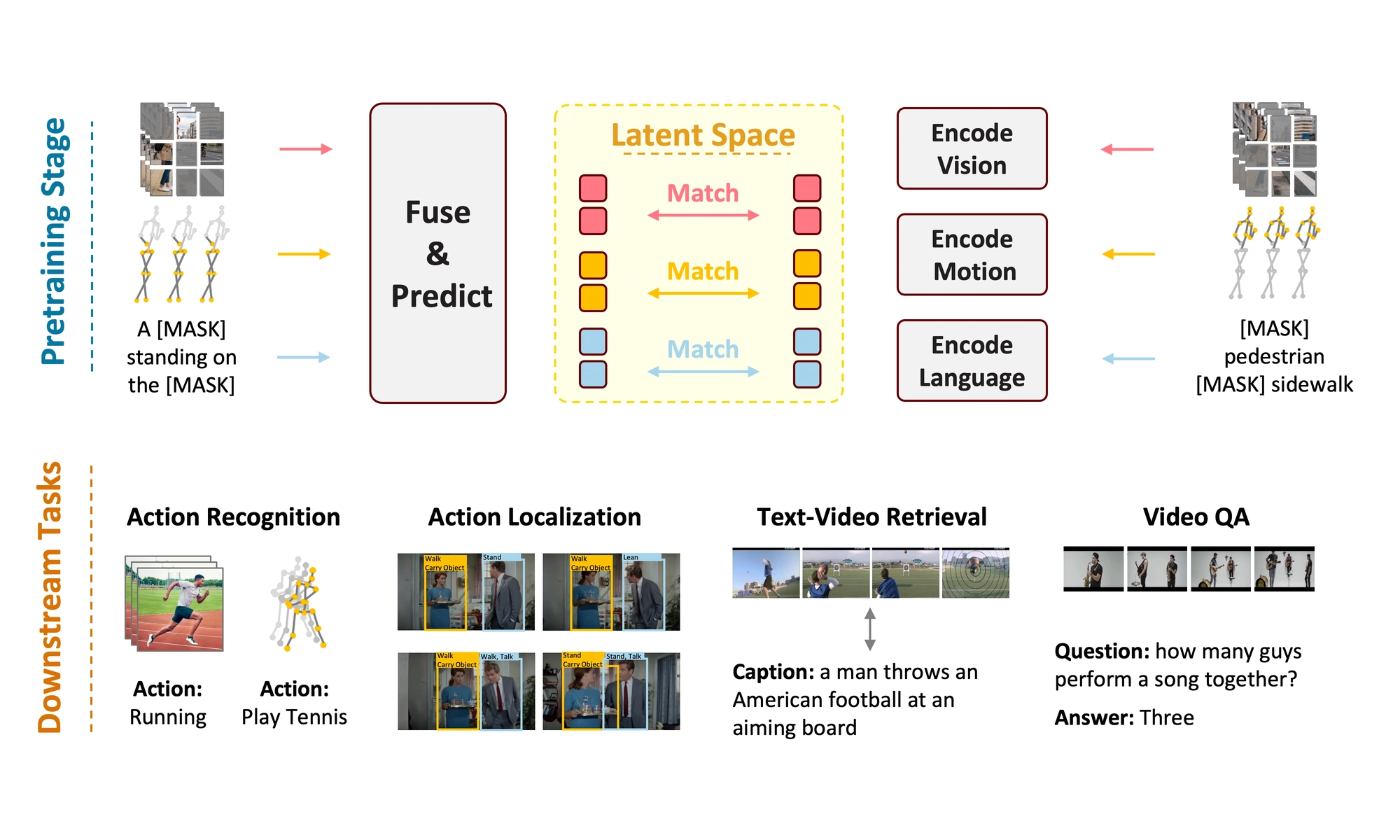

OSKAR is a self-supervised multimodal foundation model that learns in the latent space by predicting masked multimodal features.

Mohamed Abdelfattah

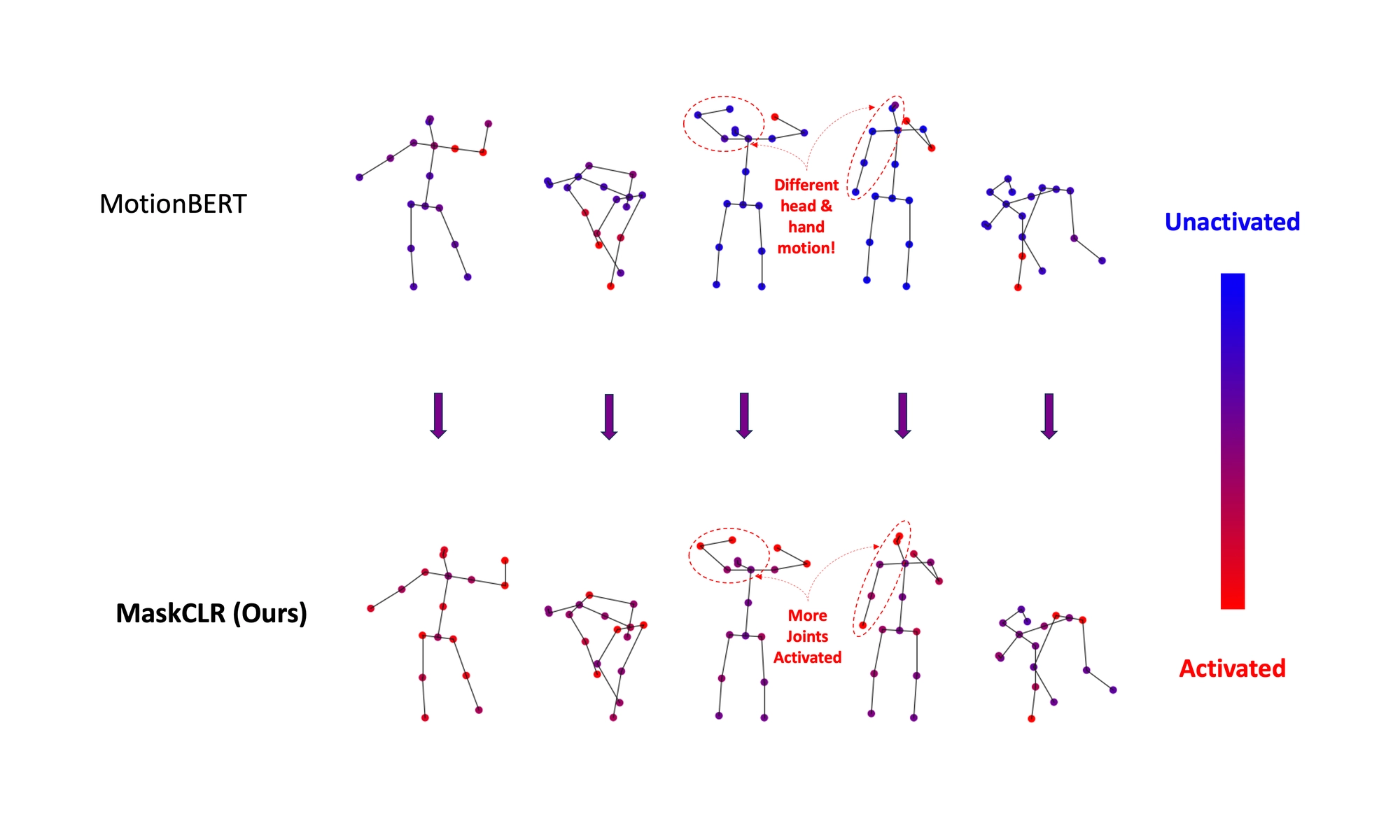

MaskCLR improves the robustness of transformer-based action recognition methods against noisy and incomplete skeletons.

Mohamed Abdelfattah

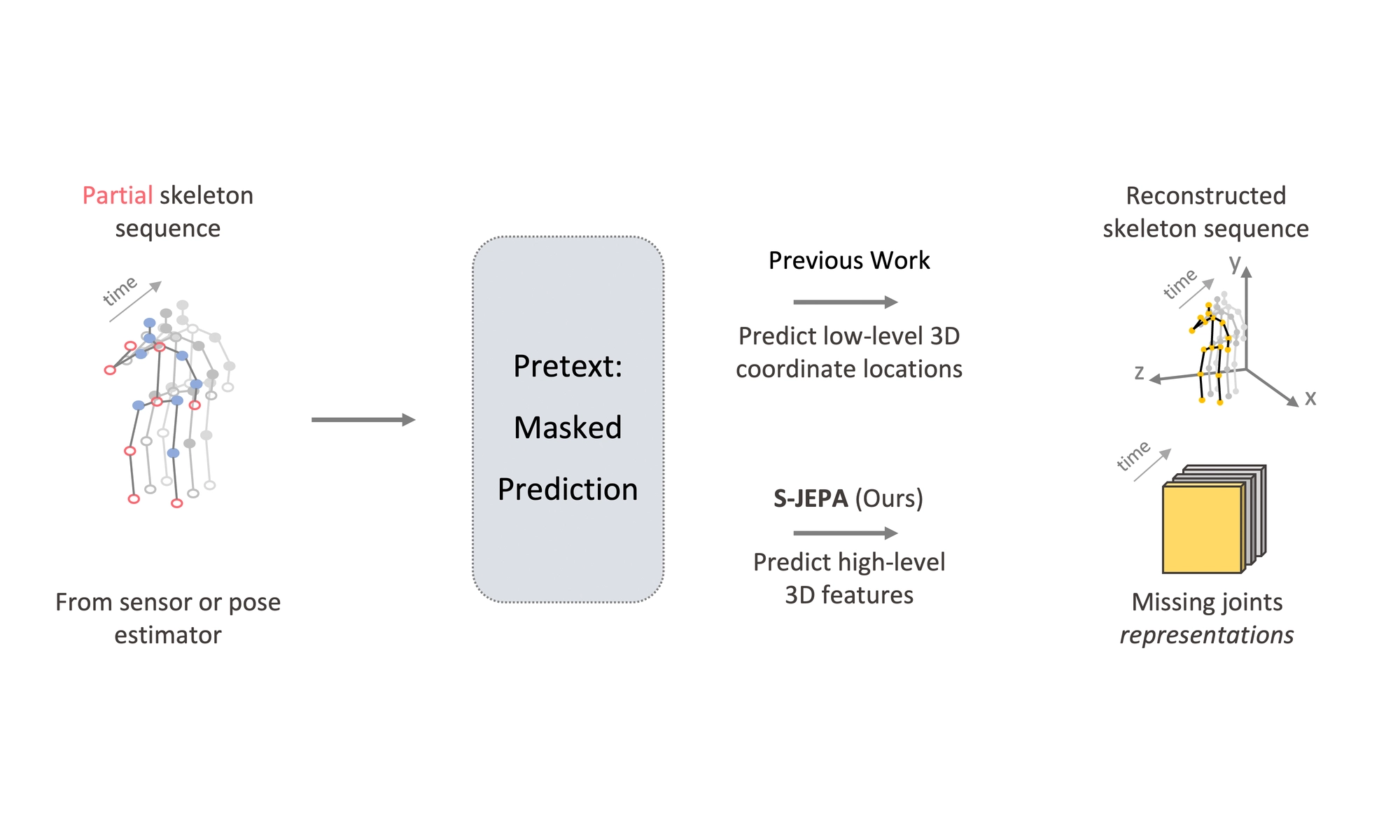

S-JEPA is an instantiation of JEPA for self-supervised skeletal action recognition.

We take a step towards computer-aided waste detection and present the first in-the-wild industrial-grade waste detection and segmentation dataset, ZeroWaste.

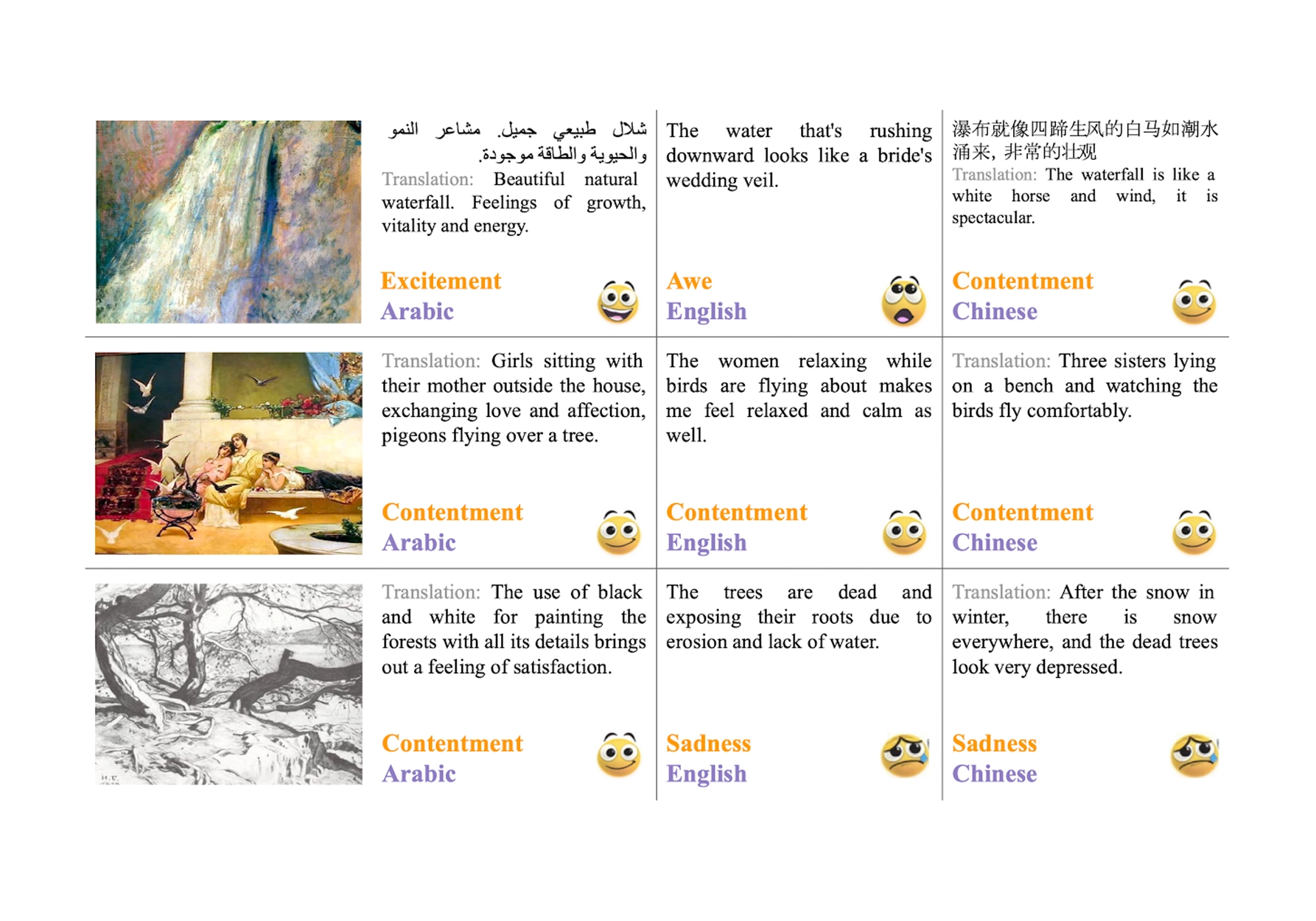

This paper introduces ArtELingo, a new benchmark and dataset designed to encourage work on diversity across languages and cultures.

Mohamed Abdelfattah

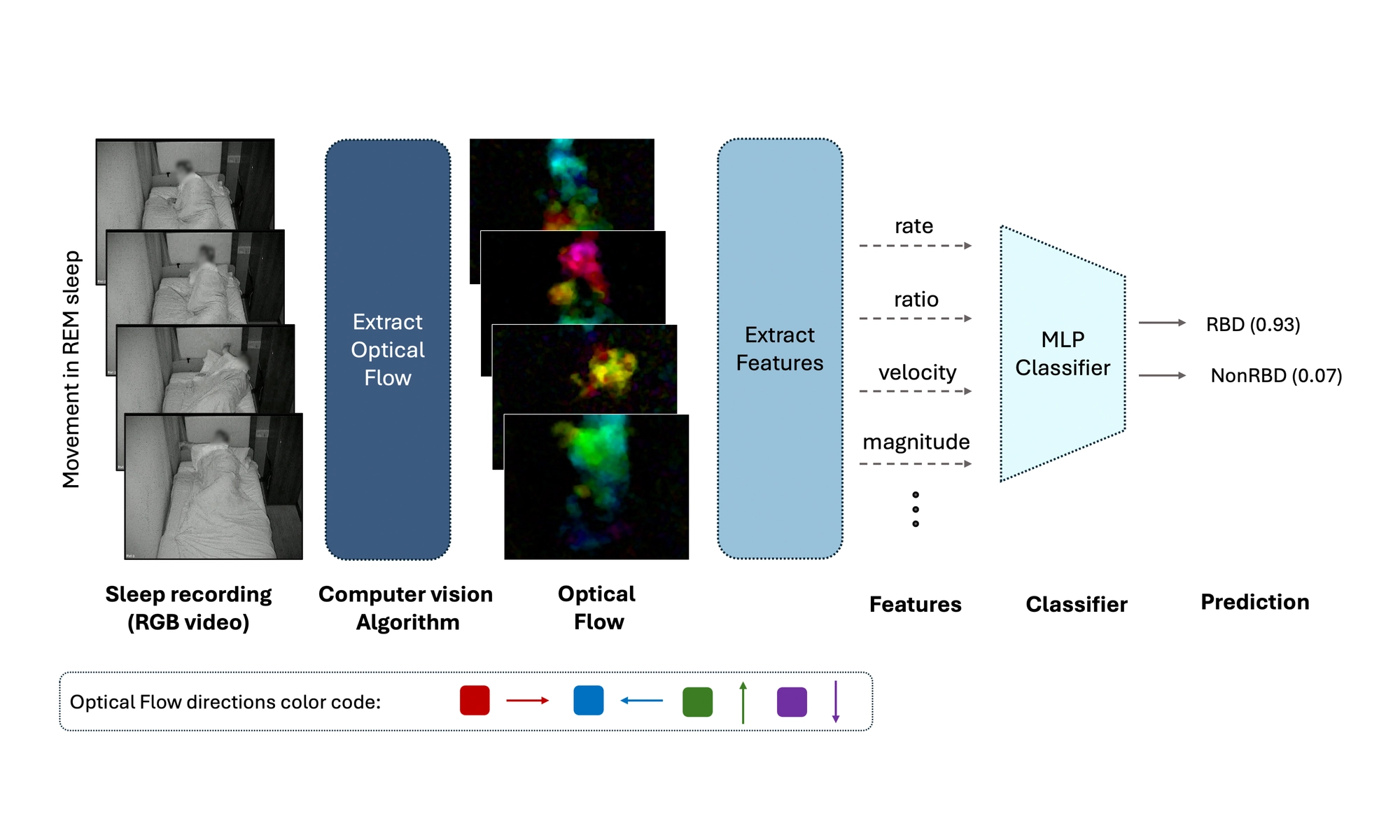

A computer-vision pipeline using conventional 2D sleep-lab cameras to automatically detect iRBD from REM movement dynamics with up to 91.9% accuracy.

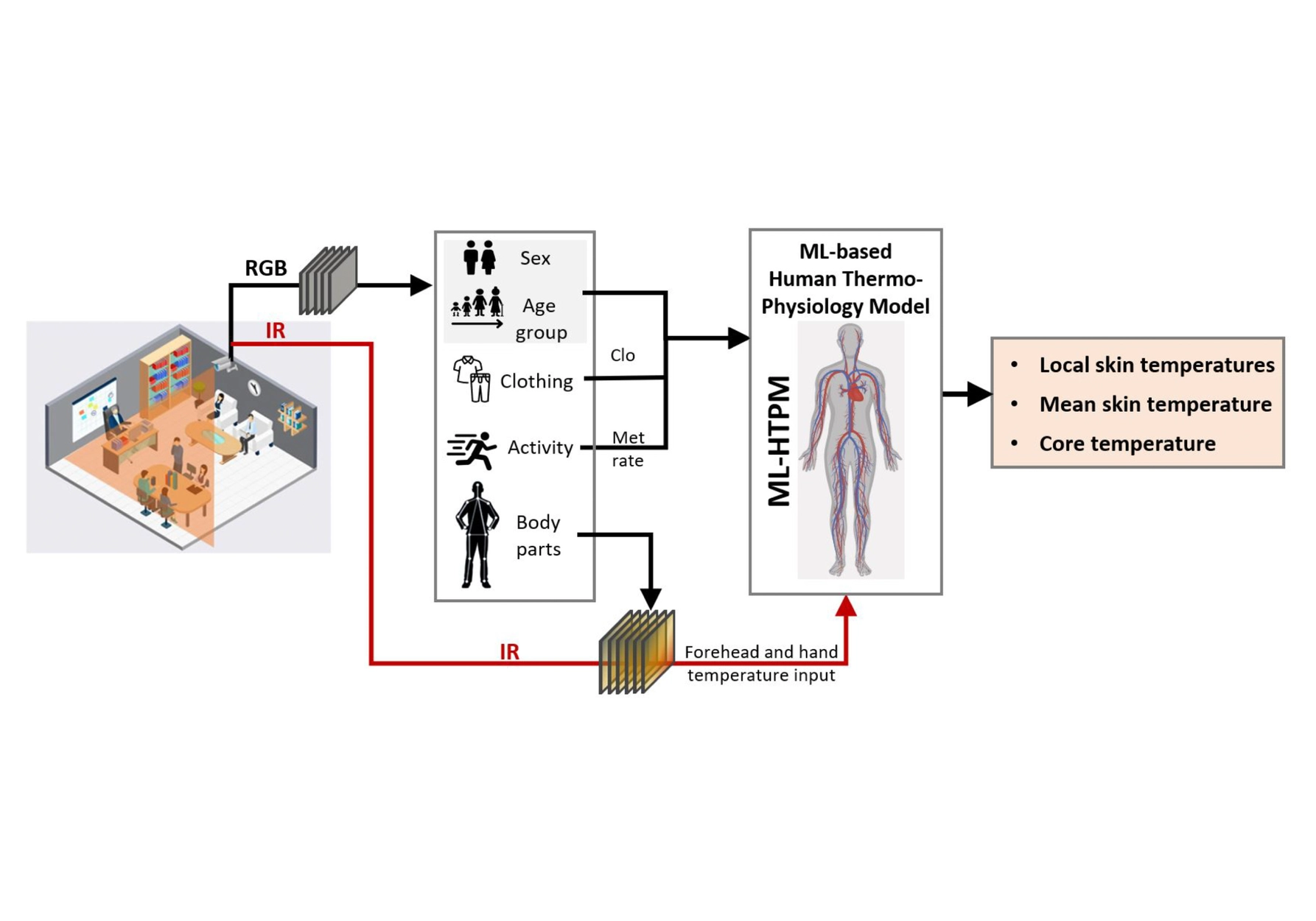

A machine-learning human thermo-physiology model (ML-HTPM) developed to predict thermal response.

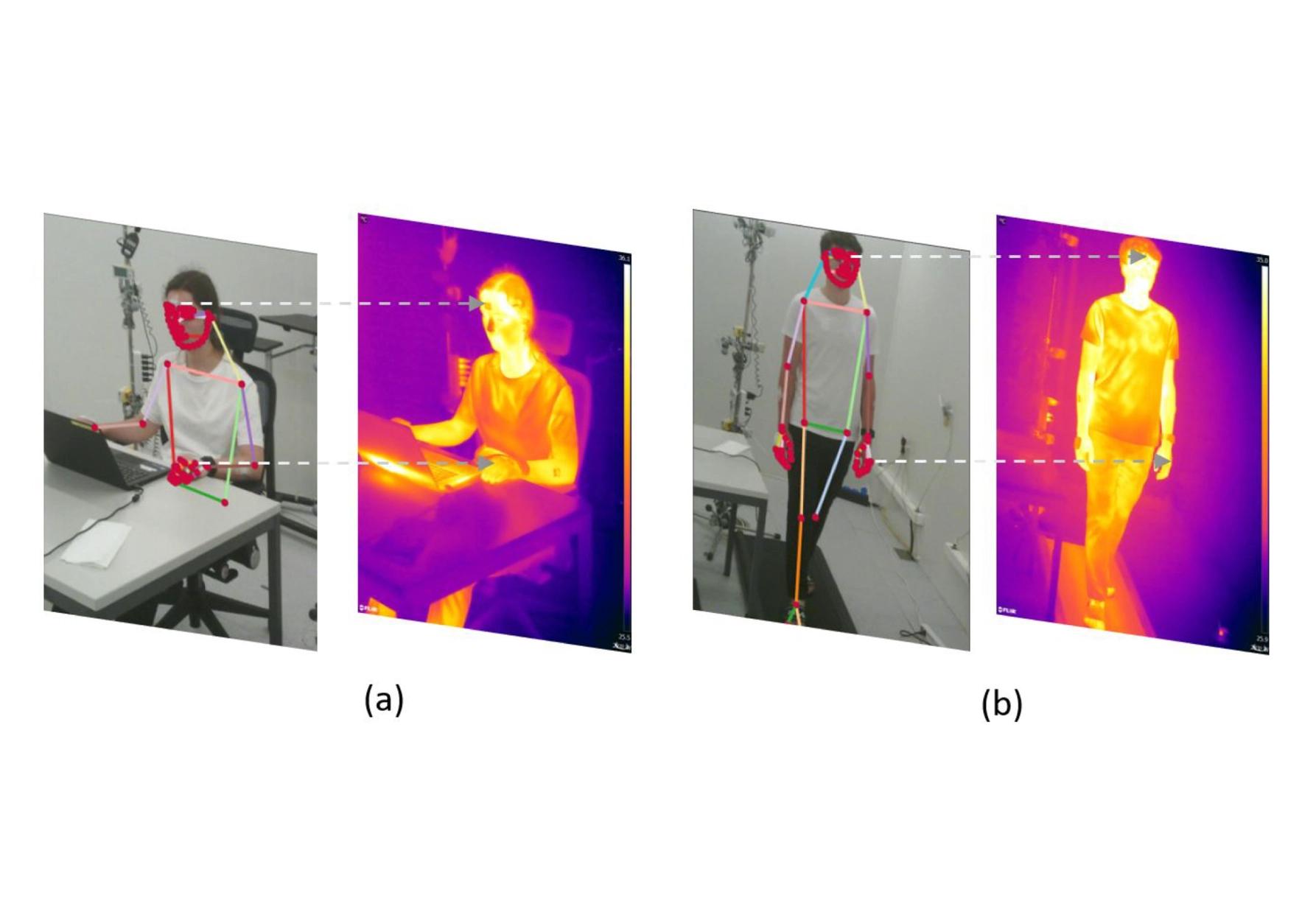

In this study, we applied multi-modal non-intrusive computer vision algorithms to extract personal features defining human thermal comfort.

A curated collection of milestones and recognitions.

Awarded the AUC PA Cup for the class of 2022 for top academic and extracurricular achievements.

AUC

High Academic Achievement (Top 10% of Graduating Class) in SSE Honours Assembly.

AUC

Undergraduate Research Award, 4,000 USD.

AUC

Ranked among the top 10 ROV teams in the Middle East.

MATE ROV Regional Competition

Ranked 1st in the CSCE programming contest.

AUC

Named Highest Achiever and Reader of the Year.

AUC

EPFL

AUC

AUC

When not researching

Usually powered by caffeine, mountain air, old music, good food, and excellent ways to avoid being indoors.

Favorite mindset

“It's more interesting to live not knowing than to have answers that might be wrong.”

I am always happy to discuss research collaborations, internships, and full-time opportunities in computer vision and multimodal learning. If you are hiring, collaborating, or want to chat about ideas, feel free to reach out.